3D Game Engine

For several years, we have been experimenting with real-time rendering pipelines, with a focus on optimizing them for games.

The current iteration makes heavy use of deferred and physically based shading techniques. Much of our effort is going into high-quality post-processing effects, including a variation on screen-space ambient occlusion (SSAO), deferred dynamic soft shadows, image-based temporal antialiasing, deferred decals and parameter mapping, and depth of field.
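To illustrate the temporal antialiasing idea, here is a minimal Python sketch of a generic TAA resolve step. It is not the engine's implementation. It assumes the history buffer has already been reprojected with motion vectors and uses a single channel for brevity. History is clamped to the current frame's 3x3 neighborhood to limit ghosting. The function name `taa_resolve` and the blend weight `alpha` are hypothetical.

```python
def taa_resolve(current, history, alpha=0.1):
    """Hypothetical TAA resolve: blend the current frame into a reprojected history buffer.

    current, history: 2D lists of floats (one channel) with identical dimensions.
    alpha: weight of the new frame; 1 - alpha comes from the clamped history.
    """
    h, w = len(current), len(current[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # 3x3 neighborhood of the current frame, clamped at the image edges.
            neighborhood = [
                current[min(max(y + dy, 0), h - 1)][min(max(x + dx, 0), w - 1)]
                for dy in (-1, 0, 1)
                for dx in (-1, 0, 1)
            ]
            # Clamp history into the neighborhood's range to reject stale samples (ghosting).
            hist = min(max(history[y][x], min(neighborhood)), max(neighborhood))
            # Exponential moving average: accumulates jittered samples over many frames.
            out[y][x] = alpha * current[y][x] + (1.0 - alpha) * hist
    return out


# Usage: a static edge with a mid-gray history value.
frame = [[0.0, 1.0], [0.0, 1.0]]
prev = [[0.5, 0.5], [0.5, 0.5]]
print(taa_resolve(frame, prev))  # roughly [[0.45, 0.55], [0.45, 0.55]]
```

A small `alpha` gives smoother results but reacts more slowly to change. The neighborhood clamp is one common way to trade a little sharpness for less ghosting.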

A game built on this engine is planned for future release.

The image above shows several of the parameters used in our deferred rendering pipeline: the composited image (top left), depth map (top right), normal map (bottom left), and roughness map (bottom right). Materials are represented with a roughness/metalness/base color model derived from Disney's physically based shading approach.
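Many Disney-derived metallic workflows convert a base color/metalness description into shading inputs in a similar way. The Python sketch below shows one common version. The 4% dielectric reflectance, the function name `material_to_shading_inputs`, and the RGB-tuple representation are assumptions for illustration. The engine may encode its materials differently.

```python
def lerp(a, b, t):
    """Linear interpolation between a and b."""
    return a + (b - a) * t


def material_to_shading_inputs(base_color, metalness, dielectric_f0=0.04):
    """Hypothetical helper: split base color + metalness into diffuse albedo and specular F0.

    base_color: (r, g, b) in linear space, each 0..1.
    metalness: 0 for dielectrics, 1 for metals (intermediate values blend).
    dielectric_f0: assumed 4% reflectance at normal incidence for non-metals.
    """
    # Metals have no diffuse response, so the diffuse albedo fades out with metalness.
    diffuse = tuple(c * (1.0 - metalness) for c in base_color)
    # Non-metals reflect a small, uncolored amount; metals tint reflections by base color.
    f0 = tuple(lerp(dielectric_f0, c, metalness) for c in base_color)
    return diffuse, f0


# Usage: the same base color treated as a dielectric and as a metal.
gold_like = (1.0, 0.78, 0.34)
print(material_to_shading_inputs(gold_like, 0.0))  # diffuse = base color, F0 = 0.04 per channel
print(material_to_shading_inputs(gold_like, 1.0))  # diffuse = black, F0 ≈ base color
```

Roughness is not involved in this split. In this sketch it would pass through unchanged and only shape the specular lobe.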

The engine has fully dynamic lighting and can render many spotlights, omnidirectional lights, and sunlight in real time. The example below shows how lighting interacts with the material model: each sphere has a different uniform roughness value. Further examples can be seen on the curtains and floor in the other screenshots.
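Spheres with different roughness values look very different because of the specular distribution term. The sketch below evaluates the GGX (Trowbridge-Reitz) normal distribution function, a widely used specular term, with the alpha = roughness² remapping popularized by Disney's model. We are assuming the engine uses this distribution; that is not confirmed. `ggx_ndf` and its minimum-roughness clamp are hypothetical choices.

```python
import math


def ggx_ndf(n_dot_h, roughness, min_roughness=0.05):
    """GGX / Trowbridge-Reitz normal distribution D(h) (hypothetical helper).

    n_dot_h: cosine between the surface normal and the half vector (0..1).
    roughness: perceptual roughness from the material (0..1).
    min_roughness: hypothetical clamp that keeps D finite for perfectly smooth surfaces.
    """
    r = max(roughness, min_roughness)
    alpha = r * r  # Disney-style remapping: alpha = roughness^2
    a2 = alpha * alpha
    denom = n_dot_h * n_dot_h * (a2 - 1.0) + 1.0
    return a2 / (math.pi * denom * denom)


# Usage: compare a smooth and a rough sphere at a few angles from the highlight center.
for rough in (0.2, 0.8):
    print(rough, [round(ggx_ndf(c, rough), 3) for c in (1.0, 0.95, 0.9)])
```

At n·h = 1 the function equals 1/(π α²). Lowering roughness therefore produces a taller, narrower highlight, while rougher surfaces spread the reflection over a wider lobe.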

Early Experiments

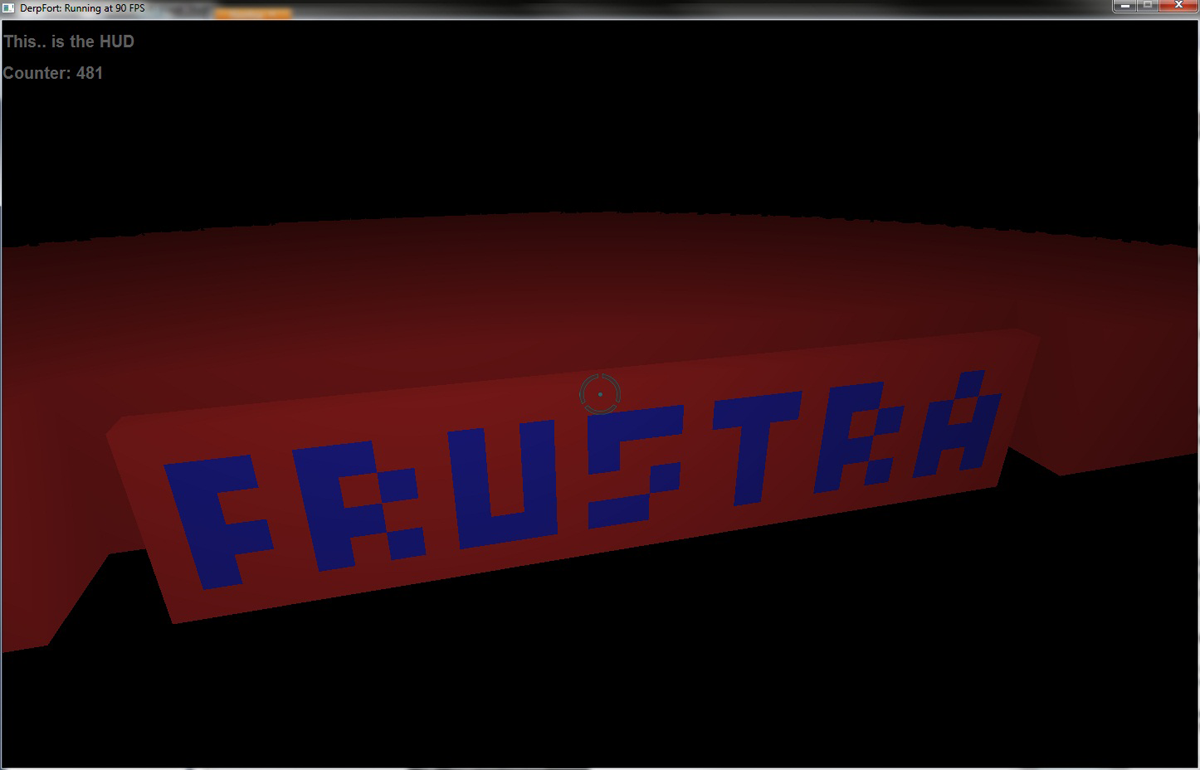

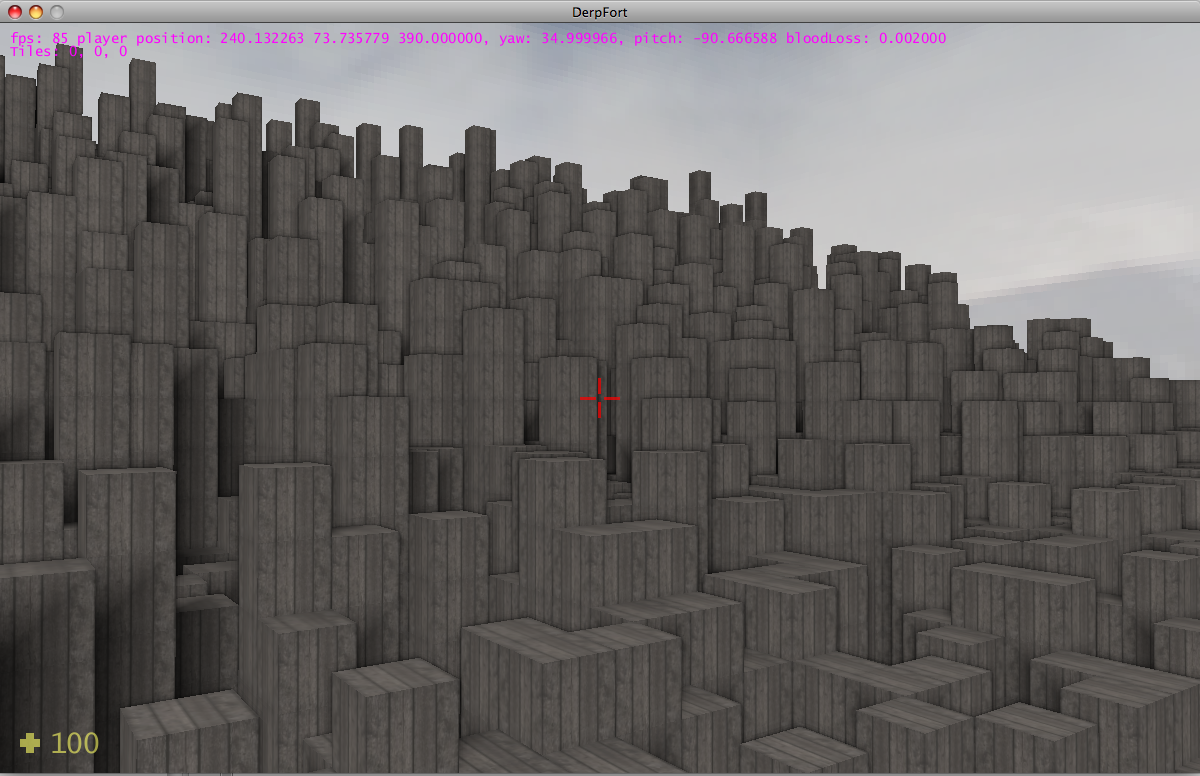

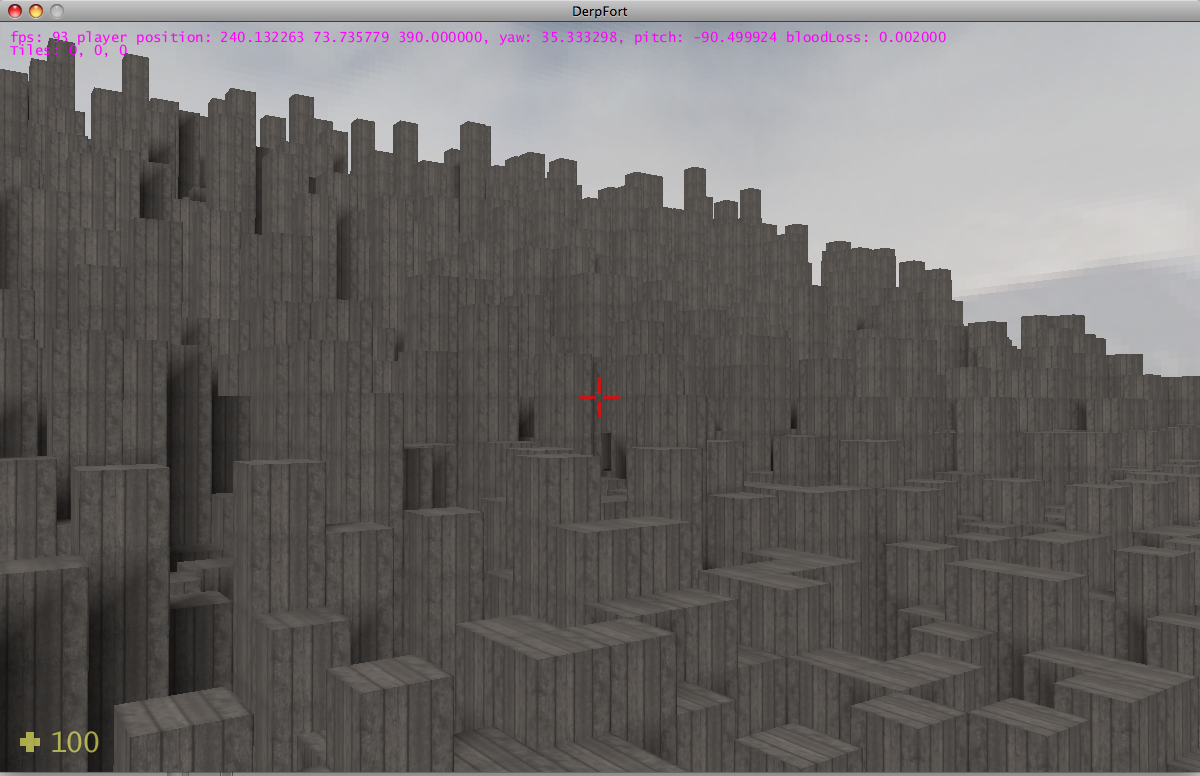

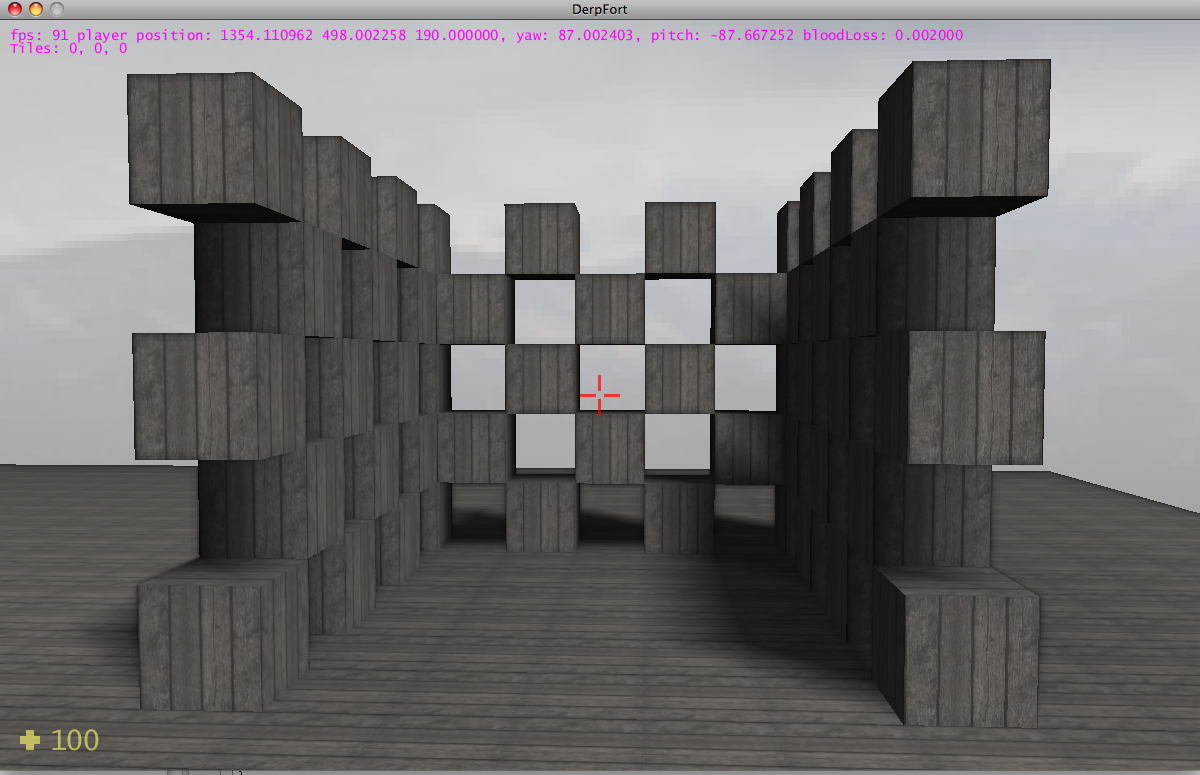

In the past, we developed other rendering engines with different goals. The first (code-named "Derp Fort", written in 2010) focused on rendering a voxel-based world inspired by Minecraft. The screenshots above show the original engine, which used vertex-based lighting and a basic SSAO shader.
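Per-vertex lighting in a voxel world often takes the following form. The sketch shows a well-known per-vertex ambient occlusion scheme for Minecraft-style meshes, in which each face corner is darkened according to its three neighboring voxels. It illustrates the general technique and is not necessarily what Derp Fort did. `vertex_ao`, `ao_brightness`, and the brightness curve are hypothetical.

```python
def vertex_ao(side1: bool, side2: bool, corner: bool) -> int:
    """Hypothetical helper: occlusion level for one corner of a voxel face.

    side1, side2: whether the two voxels sharing an edge with this corner are solid.
    corner: whether the diagonal voxel is solid.
    Returns 3 (unoccluded) down to 0 (fully occluded).
    """
    if side1 and side2:
        # Both edge neighbors are solid, so the corner voxel cannot add more occlusion.
        return 0
    return 3 - (side1 + side2 + corner)


def ao_brightness(level: int, min_brightness: float = 0.4) -> float:
    """Map an occlusion level (0..3) to a vertex brightness factor (hypothetical curve)."""
    return min_brightness + (1.0 - min_brightness) * level / 3


# Usage: a corner with one solid edge neighbor and a solid diagonal neighbor.
level = vertex_ao(True, False, True)
print(level, ao_brightness(level))
```

Interpolating these per-vertex values across each face gives soft contact shadows without a screen-space pass. A common refinement flips a quad's triangulation when its diagonal AO values differ, which avoids visible anisotropy.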

The second iteration of Derp Fort was more hardware-oriented, and rendered voxels using a raytracing method implemented entirely on the GPU.
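A typical core of GPU voxel ray tracing is a grid traversal loop such as the Amanatides-Woo DDA, which visits every voxel a ray crosses, in order. The Python sketch below shows that loop on the CPU for readability. In a real renderer it would run per pixel in a shader. The `is_solid` callback, unit-sized voxels, and the step limit are assumptions, and this is not necessarily the exact method Derp Fort used.

```python
import math


def traverse_voxels(origin, direction, is_solid, max_steps=256):
    """Step a ray through a unit voxel grid (Amanatides-Woo DDA sketch).

    origin, direction: 3-tuples of floats; direction need not be normalized.
    is_solid: hypothetical callback (ix, iy, iz) -> bool.
    Returns the first solid voxel hit as an (ix, iy, iz) tuple, or None.
    """
    voxel = [math.floor(c) for c in origin]
    step = [0, 0, 0]
    t_max = [math.inf] * 3    # ray parameter t at the next voxel boundary on each axis
    t_delta = [math.inf] * 3  # change in t needed to cross one whole voxel on each axis
    for axis in range(3):
        d = direction[axis]
        if d > 0:
            step[axis] = 1
            t_max[axis] = (voxel[axis] + 1 - origin[axis]) / d
            t_delta[axis] = 1 / d
        elif d < 0:
            step[axis] = -1
            t_max[axis] = (voxel[axis] - origin[axis]) / d
            t_delta[axis] = -1 / d
    for _ in range(max_steps):
        if is_solid(*voxel):
            return tuple(voxel)
        # Advance along the axis whose next boundary is closest.
        axis = t_max.index(min(t_max))
        voxel[axis] += step[axis]
        t_max[axis] += t_delta[axis]
    return None


# Usage: a floor of solid voxels at z <= 0, hit by a ray cast downward at an angle.
print(traverse_voxels((0.5, 0.5, 4.5), (0.3, 0.2, -1.0), lambda x, y, z: z <= 0))
```

Each iteration costs only a comparison and an addition, so the loop maps naturally onto GPU threads. Performance then depends mostly on how quickly `is_solid` can be answered, for example from a 3D texture or a sparse octree.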